An algorithm is a clearly defined, step-by-step set of instructions designed to solve a problem or perform a specific task. In computing, algorithms provide the logical foundation that computers follow to process data, make decisions, and generate output. Every computer program, application, and digital system relies on one or more algorithms to function correctly.

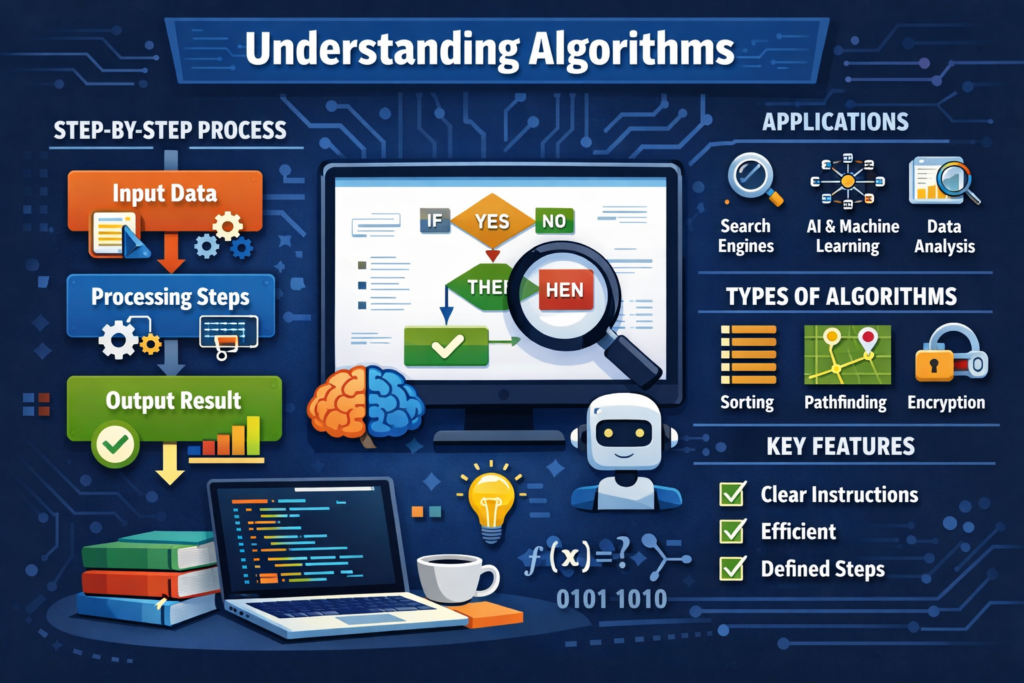

Algorithms take input, process it through a series of logical steps, and produce an output. These steps must be precise, unambiguous, and finite, meaning the algorithm must eventually come to an end. A well-designed algorithm is not only correct but also efficient, using as little time and memory as the problem allows.
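
As an illustration of these properties, here is a minimal sketch of Euclid's algorithm for the greatest common divisor. It is chosen only as a familiar example and does not come from the text above; the function name `gcd` and the sample values are assumptions made for illustration.

```python
def gcd(a: int, b: int) -> int:
    """Return the greatest common divisor of two non-negative integers."""
    # Input: two non-negative integers (illustrative choice of problem).
    if a < 0 or b < 0:
        raise ValueError("inputs must be non-negative")

    # Process: each pass replaces (a, b) with (b, a % b).
    # The second value strictly decreases and never goes below zero,
    # so the loop is guaranteed to stop: the algorithm is finite.
    while b != 0:
        a, b = b, a % b

    # Output: the last non-zero value is the greatest common divisor.
    return a


print(gcd(48, 18))  # prints 6
```

Every step is unambiguous, the loop always terminates, and the same input always yields the same output.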

Algorithms are widely used across many fields, not only within computer science. In mathematics, they are applied to calculations and problem-solving. In business and finance, algorithms power trading systems, risk assessment models, and fraud detection tools. In artificial intelligence and machine learning, algorithms enable systems to learn from data, recognize patterns, and make predictions. Search engines, recommendation systems, navigation apps, and social media platforms all rely heavily on algorithms to deliver relevant and personalized results.

There are many types of algorithms, each designed for specific purposes. Common examples include sorting algorithms that organize data, searching algorithms that locate information, encryption algorithms that secure data, and optimization algorithms that find the best solution among many possibilities. An algorithm can be as simple as a cooking recipe or as complex as the systems used in autonomous vehicles and advanced data analytics.
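
Two of these categories, sorting and searching, can be sketched in a few lines. The example below uses insertion sort and binary search purely as representative choices; the function names and the sample list are hypothetical, and production code would normally rely on Python's built-in `sorted` and the `bisect` module instead.

```python
from typing import List, Optional, Sequence


def insertion_sort(items: Sequence[int]) -> List[int]:
    """Return a new list with the items in ascending order."""
    result = list(items)  # copy, so the caller's data is left unchanged
    for i in range(1, len(result)):
        current = result[i]
        j = i - 1
        # Shift larger elements one slot right to open a gap for `current`.
        while j >= 0 and result[j] > current:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = current
    return result


def binary_search(sorted_items: Sequence[int], target: int) -> Optional[int]:
    """Return the index of `target` in a sorted sequence, or None if absent."""
    low, high = 0, len(sorted_items) - 1
    while low <= high:
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return None


data = [7, 2, 9, 4, 1]            # sample input, chosen for illustration
ordered = insertion_sort(data)
print(ordered)                    # prints [1, 2, 4, 7, 9]
print(binary_search(ordered, 7))  # prints 3
print(binary_search(ordered, 5))  # prints None
```

Binary search depends on its input already being sorted, which is one reason sorting and searching algorithms are so often used together.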

In modern digital society, algorithms play a crucial role in automation, efficiency, and innovation. Understanding how algorithms work is essential for software development, data science, cybersecurity, and technology-driven decision-making.